Automate more, faster, with Test Modeller – TMX Integration

The QA community has been speaking about functional test automation for a long time now, but automated test execution rates remain too low. A major...


Test teams today are striving to automate more in order to test ever-more complex systems within ever-shorter iterations. However, the rate of test automation remains low, and automation is not always focused where it can save the most time and deliver the greatest value.

Below, some common misconceptions about test automation are considered, along with an alternative approach to rigorous test automation that can keep up with fast-changing systems.

One common misconception is that test automation is a job for specialists. This would be true if test automation required deep technical skills and coding experience, but it doesn't. Scriptless frameworks and keyword-driven approaches make it possible to automate tests without hours of complex scripting. The challenge is to create a framework with enough granularity to test your complex systems, without making it just as complex as scripting from scratch.
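To illustrate the keyword-driven idea, here is a minimal Python sketch, not the design of any particular framework: each test step is a row of (keyword, target, value), and a small interpreter dispatches each keyword to a reusable action. The keywords (`open`, `type`, `click`, `assert_text`) and the `FakePage` class are hypothetical stand-ins chosen so the example runs without a browser; a real framework would bind the same keywords to a UI driver.

```python
from dataclasses import dataclass, field


@dataclass
class FakePage:
    """Hypothetical stand-in for a browser page, so the sketch runs anywhere."""
    url: str = ""
    fields: dict = field(default_factory=dict)
    clicked: list = field(default_factory=list)
    banner: str = ""


def open_url(page, target, value):
    page.url = target


def type_text(page, target, value):
    page.fields[target] = value


def click(page, target, value):
    page.clicked.append(target)
    # Simulated application behaviour: a login click shows a welcome banner.
    if target == "login_button" and page.fields.get("username"):
        page.banner = f"Welcome, {page.fields['username']}"


def assert_text(page, target, value):
    # For simplicity this sketch only checks the banner text.
    if value not in page.banner:
        raise AssertionError(f"expected {value!r} in {page.banner!r}")


# The keyword vocabulary: testers combine these rows without writing code.
KEYWORDS = {
    "open": open_url,
    "type": type_text,
    "click": click,
    "assert_text": assert_text,
}


def run_test(steps, page=None):
    """Execute (keyword, target, value) rows in order and return the page."""
    page = page if page is not None else FakePage()
    for keyword, target, value in steps:
        if keyword not in KEYWORDS:
            raise ValueError(f"unknown keyword: {keyword}")
        KEYWORDS[keyword](page, target, value)
    return page


# A test case written purely as keyword rows.
LOGIN_TEST = [
    ("open", "https://example.test/login", ""),
    ("type", "username", "alice"),
    ("type", "password", "s3cret"),
    ("click", "login_button", ""),
    ("assert_text", "banner", "Welcome, alice"),
]

if __name__ == "__main__":
    print(run_test(LOGIN_TEST).banner)  # Welcome, alice
```

The granularity trade-off described above shows up directly in the `KEYWORDS` table: too few keywords and complex systems can't be expressed; too many and the vocabulary becomes as hard to learn as a scripting language.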

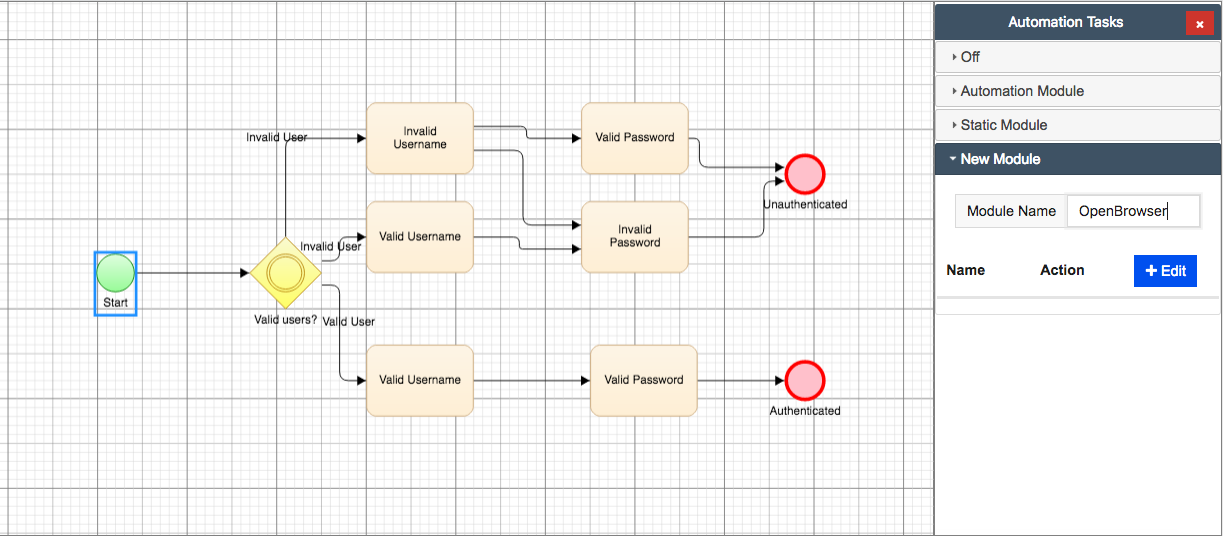

Model Based approaches offer an intuitive and visual approach to capture a system’s logic accurately, while low code techniques make it possible to move from visual models to fully automated tests. The VIP Test Modeller, for instance, provides a UI Recorder to automatically convert manual test activity into models, complete with the data and message activity needed to automate them.

Generating automated tests from easy-to-use visual models. Re-usable subprocesses can

be dragged-and-dropped to intuitive flowchart models in The VIP Test Modeller.
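The core idea of generating tests from a flowchart can be sketched in a few lines of Python, assuming the model is a directed acyclic graph from a `Start` node to terminal nodes: every start-to-end path is one test case. The login model below is hypothetical, and exhaustive path enumeration is only one possible strategy; it is not a description of how The VIP Test Modeller generates tests, and real tools usually apply coverage criteria to keep the test set small.

```python
from typing import Dict, List, Sequence

# Hypothetical flowchart model: each node maps to the nodes it can flow to.
LOGIN_MODEL: Dict[str, List[str]] = {
    "Start": ["Enter credentials"],
    "Enter credentials": ["Valid user", "Invalid user"],
    "Valid user": ["Show dashboard"],
    "Invalid user": ["Show error"],
    "Show dashboard": ["End"],
    "Show error": ["End"],
    "End": [],
}


def generate_paths(model: Dict[str, List[str]], node: str = "Start",
                   prefix: Sequence[str] = ()) -> List[List[str]]:
    """Depth-first enumeration of every path from `node` to a terminal node.

    Each complete path is one test case. Assumes the model has no cycles.
    """
    path = list(prefix) + [node]
    if not model[node]:  # no successors: the test ends here
        return [path]
    paths = []
    for successor in model[node]:
        paths.extend(generate_paths(model, successor, path))
    return paths


if __name__ == "__main__":
    for number, test in enumerate(generate_paths(LOGIN_MODEL), start=1):
        print(f"Test {number}: " + " -> ".join(test))
```

Running it prints two tests, one per branch of the `Enter credentials` decision. Adding a branch to the model adds tests automatically, with no script to edit.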

The object scanner similarly scrapes screens for all the information needed for automated testing, using a keyword-driven, drop-down approach to overlay the page objects onto visual flowcharts. You can move from manual test activity to automated tests in minutes, while common system components that have been modelled once can be dragged and dropped into new models, automatically generating a new set of automated tests.
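A minimal sketch of what overlaying page objects onto a flowchart can mean, under stated assumptions: a hypothetical scan result maps element names to locators, and each flowchart node is annotated with a (keyword, element, value) choice, as a tester might pick from drop-downs. Walking a path through the model then yields executable steps. The locator strings, node names, and `path_to_steps` function are illustrative only, not the output or API of any particular object scanner.

```python
from typing import Dict, List, Tuple

# Hypothetical output of an object scanner: element name -> locator.
SCANNED_OBJECTS: Dict[str, str] = {
    "username": "css=#username",
    "password": "css=#password",
    "login_button": "css=button[type=submit]",
}

# Flowchart nodes overlaid with a (keyword, element, value) selection.
NODE_ACTIONS: Dict[str, Tuple[str, str, str]] = {
    "Enter username": ("type", "username", "alice"),
    "Enter password": ("type", "password", "s3cret"),
    "Submit": ("click", "login_button", ""),
}


def path_to_steps(path: List[str]) -> List[Tuple[str, str, str]]:
    """Turn a path of node names into executable (keyword, locator, value) steps.

    Nodes with no overlaid action (such as Start and End) are skipped. An
    action that names an element missing from the scan raises KeyError, so
    gaps in the page objects surface before any test runs.
    """
    steps = []
    for node in path:
        if node not in NODE_ACTIONS:
            continue
        keyword, element, value = NODE_ACTIONS[node]
        steps.append((keyword, SCANNED_OBJECTS[element], value))
    return steps


if __name__ == "__main__":
    for step in path_to_steps(["Start", "Enter username", "Enter password", "Submit", "End"]):
        print(step)
```

Keeping locators in one scanned table means a changed screen is fixed once, in `SCANNED_OBJECTS`, rather than in every test that touches it.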

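Another misconception is that automation cannot teach you anything new about the system under test. This myth assumes there's no cognitive or exploratory dimension to test automation, and that automated testing is only good for verifying what you already know and expect.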

In reality, it all depends on how you drive automation. If you slowly and manually convert regression tests into scripts one by one, you won't see any improvement in coverage or learning. Likewise, if the tests you are automating are poor, the automated tests will be poor too.

Model-Driven Development again offers an alternative. Importing existing tests or data into mathematically precise models enables the application of combinatorial techniques, generating automated tests that cover as much of a system's logic as possible, based on the information contained in the existing tests.
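Combinatorial techniques can be illustrated with pairwise (all-pairs) testing, one common choice among several. The greedy Python sketch below assumes the model's decision points have been reduced to a few hypothetical parameters, and selects test cases until every pair of values across any two parameters appears in at least one test. It is not claimed to be the algorithm any particular tool uses, and greedy selection does not in general guarantee the smallest possible test set.

```python
from itertools import combinations, product
from typing import Dict, List, Set, Tuple

Params = Dict[str, List[str]]
Pair = Tuple[str, str, str, str]


def pairs_of(test: Dict[str, str], names: List[str]) -> Set[Pair]:
    """Every (param, value, param, value) pair exercised by one test."""
    return {(a, test[a], b, test[b]) for a, b in combinations(names, 2)}


def pairwise_tests(params: Params) -> List[Dict[str, str]]:
    """Greedy all-pairs selection.

    Candidates are the full Cartesian product, so this only suits small
    models; dedicated tools use more scalable constructions.
    """
    names = list(params)
    candidates = [dict(zip(names, combo)) for combo in product(*params.values())]
    if len(names) < 2:  # no pairs to cover: every value is its own test
        return candidates
    # Every value pair across every two parameters must appear in some test.
    uncovered: Set[Pair] = set()
    for candidate in candidates:
        uncovered |= pairs_of(candidate, names)
    tests = []
    while uncovered:
        # Pick the candidate covering the most still-uncovered pairs.
        best = max(candidates, key=lambda t: len(pairs_of(t, names) & uncovered))
        tests.append(best)
        uncovered -= pairs_of(best, names)
    return tests


# Hypothetical parameters extracted from a checkout model.
CHECKOUT_PARAMS: Params = {
    "browser": ["Chrome", "Firefox", "Edge"],
    "os": ["Windows", "macOS"],
    "account": ["Standard", "Premium"],
}

if __name__ == "__main__":
    for test in pairwise_tests(CHECKOUT_PARAMS):
        print(test)
```

For these hypothetical parameters the greedy pass selects 6 tests, against 12 for the full Cartesian product, while still exercising every pair of values at least once.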

This quickly moves testing from existing ‘checks’ to exercising new areas of functionality, potentially uncovering further information. If a closed feedback loop in turn feeds this information back into the model, the known information about the system grows with it, providing greater learning, visibility, and testing confidence.

Equating test automation with test execution automation is a common pitfall, and one that can introduce more time-consuming manual effort than automating execution saves.

Automating test execution reduces manual effort during only one phase of testing, and can introduce further manual effort, such as scripting or keyword configuration. It also leaves many time-consuming, repetitive tasks untouched, from refreshing test data and spinning up environments to updating fields across test and project management tools.

For testing to keep pace with short development iterations, a greater degree of end-to-end automation is required.

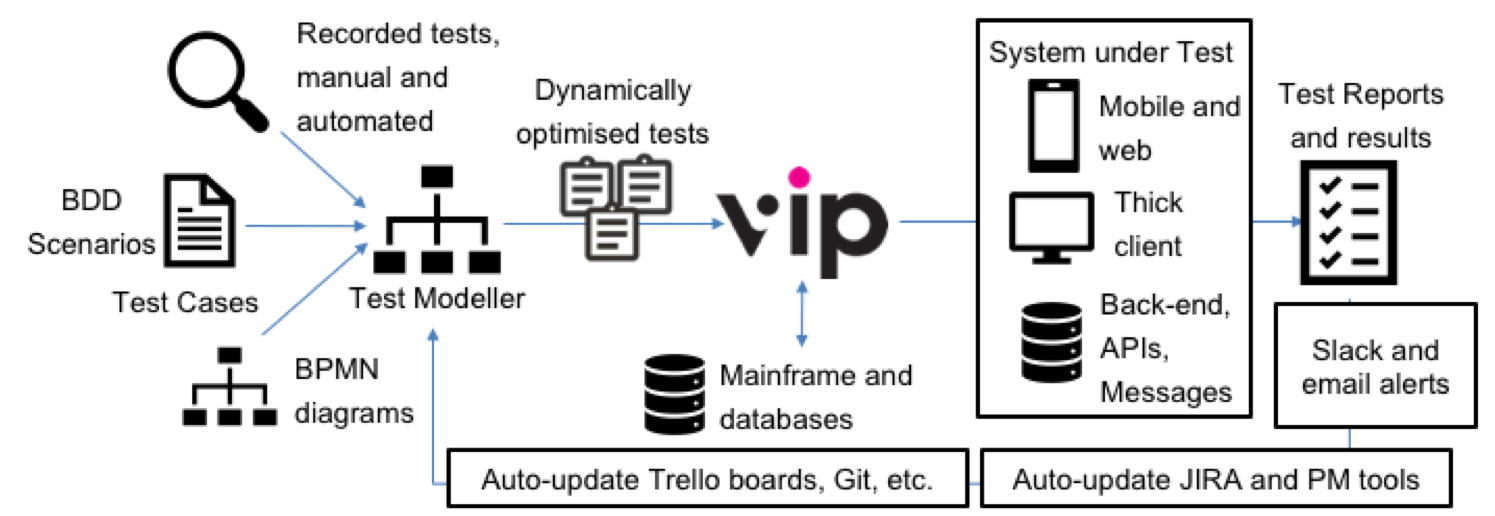

Robotic Process Automation (RPA) is one growing trend in testing, with TestDev teams looking to technologies that arose in the world of operations to operate the repetitive, rule-based processes that slow them down. RPA focuses on introducing non-invasive bots to reliably handle repeated processes, and can automate the TestOps tasks that slow teams down.

Combining automation of TestDev tasks with Robotic Process Automation for end-to-end

test automation.
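The rule-based logic such a bot encodes can be sketched in Python. This is not RPA in the strict sense, which drives existing tool UIs non-invasively; the dictionaries `test_tool` and `issue_tracker`, the record IDs, and the two rules are hypothetical stand-ins that only show how "when X happens, update Y" tasks become repeatable.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical stand-ins for records in a test management tool and an issue
# tracker. A real RPA bot would reach these through each tool's existing UI.
test_tool: Dict[str, str] = {"TC-1": "Not run", "TC-2": "Not run"}
issue_tracker: Dict[str, str] = {"REQ-10": "In test", "REQ-11": "In test"}


@dataclass
class Result:
    test_id: str
    requirement: str
    passed: bool


@dataclass
class Rule:
    name: str
    applies: Callable[[Result], bool]
    action: Callable[[Result], None]


def record_outcome(result: Result) -> None:
    test_tool[result.test_id] = "Passed" if result.passed else "Failed"


def reopen_requirement(result: Result) -> None:
    issue_tracker[result.requirement] = "Reopened"


RULES: List[Rule] = [
    Rule("record outcome", lambda r: True, record_outcome),
    Rule("reopen requirement", lambda r: not r.passed, reopen_requirement),
]


def run_bot(results: List[Result]) -> List[str]:
    """Apply every matching rule to every result and return an audit log."""
    log = []
    for result in results:
        for rule in RULES:
            if rule.applies(result):
                rule.action(result)
                log.append(f"{rule.name}: {result.test_id}")
    return log


if __name__ == "__main__":
    print(run_bot([Result("TC-1", "REQ-10", True), Result("TC-2", "REQ-11", False)]))
    print(test_tool, issue_tracker)
```

Because each rule only sets a status, running the bot twice on the same results leaves the records unchanged, which matters when bots are re-run after a failure.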

Meanwhile, more TestDev tasks can be automated, and Model-Based approaches provide one way to generate test cases automatically from easy-to-maintain models. If test data and virtual services are also defined at the model level, a single, centralised resource can be reused to automate test design, data and environment provisioning, and test execution.
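As a sketch of what defining test data and virtual services at the model level might look like, assume each model node carries the data it needs and the canned responses a virtual service should return. Deriving a test from a path then yields the steps, data, and stubs together. The `Node` class, endpoint, and values are hypothetical; `4111111111111111` is a widely used dummy card number, not real payment data.

```python
import json
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Node:
    """A model step carrying the test data and service stubs it needs."""
    name: str
    data: Dict[str, str] = field(default_factory=dict)
    # Virtual service definition: endpoint -> canned response.
    stubs: Dict[str, dict] = field(default_factory=dict)


# Hypothetical path through a payment model.
PAYMENT_PATH: List[Node] = [
    Node("Log in", data={"username": "alice"}),
    Node("Pay by card",
         data={"card_number": "4111111111111111"},  # widely used dummy card number
         stubs={"POST /payments": {"status": 201, "body": {"result": "approved"}}}),
    Node("Show receipt"),
]


def build_test_bundle(path: List[Node]) -> dict:
    """Derive steps, test data and virtual-service stubs from one model path.

    Later nodes overwrite earlier values for the same data key or endpoint.
    """
    bundle = {"steps": [], "data": {}, "stubs": {}}
    for node in path:
        bundle["steps"].append(node.name)
        bundle["data"].update(node.data)
        bundle["stubs"].update(node.stubs)
    return bundle


if __name__ == "__main__":
    print(json.dumps(build_test_bundle(PAYMENT_PATH), indent=2))
```

Because steps, data, and stubs hang off the same nodes, editing one node changes all three in every test that passes through it.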

Manual maintenance of automated tests is one of the biggest bottlenecks that automating test execution can introduce. Test scripts pile up without traceability to the system code or business requirements. When a new release arrives, the scripts must be checked and updated one by one before new data and environments are provisioned. The time spent on maintenance quickly outweighs the time available in a sprint to test new or critical functionality.

This again comes back to the question of how automated tests were created originally.

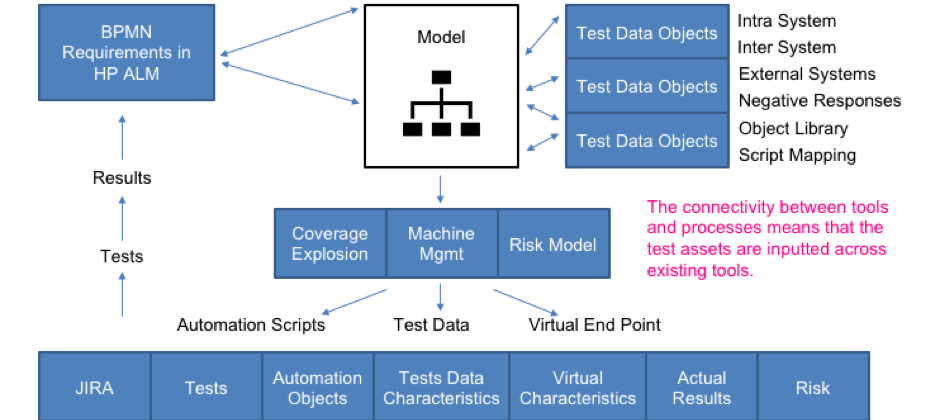

Generating automated tests, data, and virtual services from the same model creates a single, central test artifact: update that, and you maintain all your test assets in one fell swoop. The model can also be linked directly to requirements, for instance by overlaying the automation logic onto business process diagrams created during the requirements phase. Test artifacts can then be maintained automatically as systems evolve, quickly and rigorously testing any changes made during an iteration.
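Linking the model to requirements makes impact analysis mechanical. In the Python sketch below, which assumes hypothetical requirement IDs and stores previously generated tests as lists of node names, a change to a requirement identifies exactly which tests pass through affected nodes. In a model-based workflow those tests would then be regenerated from the updated model rather than edited by hand.

```python
from typing import Dict, List, Set

# Hypothetical links from model nodes to the requirements they implement.
NODE_REQUIREMENTS: Dict[str, Set[str]] = {
    "Enter credentials": {"REQ-1"},
    "Valid user": {"REQ-1", "REQ-2"},
    "Invalid user": {"REQ-3"},
    "Show dashboard": {"REQ-2"},
    "Show error": {"REQ-3"},
}

# Tests previously generated from the model (each one is a path of nodes).
TESTS: Dict[str, List[str]] = {
    "T1": ["Start", "Enter credentials", "Valid user", "Show dashboard", "End"],
    "T2": ["Start", "Enter credentials", "Invalid user", "Show error", "End"],
}


def impacted_tests(changed_requirements: Set[str]) -> List[str]:
    """Return IDs of tests whose path touches a node linked to a changed requirement."""
    impacted = []
    for test_id, path in TESTS.items():
        linked = set().union(*(NODE_REQUIREMENTS.get(node, set()) for node in path))
        if linked & changed_requirements:
            impacted.append(test_id)
    return impacted


if __name__ == "__main__":
    print(impacted_tests({"REQ-3"}))
```

A change to `REQ-3` (the error handling) flags only `T2`, while a change to `REQ-1` flags both tests because both pass through `Enter credentials`.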

Test teams today face complex systems that span legacy applications, mainframes and databases, numerous APIs and integrated technologies, and whatever technologies their users prefer. They must also reckon with the sprawling technologies used by internal teams, executing tests across a range of platforms and updating information across numerous tools.

It is not possible to automate every test and every end-to-end process in one go. A better approach starts small, automating largely self-contained components first. If the automation is reusable, these small islands of quality testing can then be connected into higher-level components, building up to rigorous end-to-end testing of complex systems.

Model-Based test automation from The VIP Test Modeller facilitates such reusability. Models and all associated automation logic and data are stored in a central workspace, from which they can quickly be dragged and dropped into new visual models. The more you automate, the quicker automation gets, and existing tests can be combined into complex, end-to-end tests.
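The "small islands connected into larger components" idea can be sketched as graph composition. Assume each reusable component is modelled once as a flowchart with an `In` entry and an `Out` exit (and no direct `In` to `Out` edge), and that a parent model marks where it belongs with a placeholder node. Inlining the component and counting paths shows end-to-end coverage growing from parts tested on their own. The models, node names, and `inline` helper are hypothetical, not The VIP Test Modeller's workspace format.

```python
from typing import Dict, List

Model = Dict[str, List[str]]

# Hypothetical reusable components, each modelled once.
SHIPPING: Model = {
    "In": ["Standard delivery", "Express delivery", "Click and collect"],
    "Standard delivery": ["Out"],
    "Express delivery": ["Out"],
    "Click and collect": ["Out"],
    "Out": [],
}
PAYMENT: Model = {
    "In": ["Pay by card", "Pay by voucher"],
    "Pay by card": ["Out"],
    "Pay by voucher": ["Out"],
    "Out": [],
}

# Parent model: "Shipping" and "Payment" are placeholders for components.
CHECKOUT: Model = {
    "Start": ["Add to basket"],
    "Add to basket": ["Shipping"],
    "Shipping": ["Payment"],
    "Payment": ["Confirm order"],
    "Confirm order": ["End"],
    "End": [],
}


def inline(parent: Model, placeholder: str, sub: Model) -> Model:
    """Return a new model with `placeholder` replaced by the nodes of `sub`.

    Edges into the placeholder go to the component's entry successors; the
    component's edges into "Out" go to the placeholder's successors.
    Assumes component node names don't clash with the parent's.
    """
    entry, exits = sub["In"], parent[placeholder]
    merged: Model = {}
    for node, succs in parent.items():
        if node != placeholder:
            merged[node] = [t for s in succs for t in (entry if s == placeholder else [s])]
    for node, succs in sub.items():
        if node not in ("In", "Out"):
            merged[node] = [t for s in succs for t in (exits if s == "Out" else [s])]
    return merged


def count_paths(model: Model, node: str = "Start") -> int:
    """Number of paths from `node` to a terminal node (assumes no cycles)."""
    succs = model[node]
    return 1 if not succs else sum(count_paths(model, s) for s in succs)


if __name__ == "__main__":
    end_to_end = inline(inline(CHECKOUT, "Shipping", SHIPPING), "Payment", PAYMENT)
    print(count_paths(end_to_end))  # 6
```

Neither component is redrawn: composing 3 shipping routes with 2 payment routes yields 3 × 2 = 6 end-to-end paths, and a fix to `PAYMENT` propagates to every model that reuses it.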

Central models connect DevOps frameworks and continuous delivery pipelines.

Meanwhile, connecting sprawling, existing technologies increases the automation of TestOps tasks. High-performance bots can increasingly execute repetitive, process-driven tasks like updating information across files, databases, and the range of tools in use at an organization. Again, it's best to start small, using workflow automation across technologies that already have integrations in place, before focusing on technologies with good APIs. You can then build up to end-to-end automation of test and development tasks.

With this alternative approach, not only can test execution automation be increased, but the tasks surrounding execution are also automated and optimised for testing quality. Automation also extends to the TestOps tasks that slow teams down, with a Model-Based approach enabling rigorous test automation that keeps up with fast-changing systems.

Get in touch if you have any questions or would like to discuss how Test Modeller can help with your test automation projects.
