Behaviour-Driven Development (BDD) emerged in 2006 [1], partly in response to perennial test and development pain points lingering in spite of “agile” methodologies. A ubiquitous language shared across design, development, and QA would avoid the frustration of miscommunication, and the defects it perpetuates.

Test and development would instead be a steady journey of mastering the system being built, rather than a wild goose chase of finding out too late that you’ve misunderstood the desired system. Engineers meanwhile would know exactly what to prioritise when updating fast-changing systems within short iterations.

BDD has since grown widespread in the QA community, and is now a commonplace concept at large banks and financial organisations, for instance.

However, “doing BDD” for these teams often means using a specification language like Gherkin to formulate scenarios, and a test automation framework like Cucumber to execute them as tests. Many of the procedural and principled challenges that BDD sought to uproot remain, with automation frameworks and specification languages serving as point solutions to individual problems:

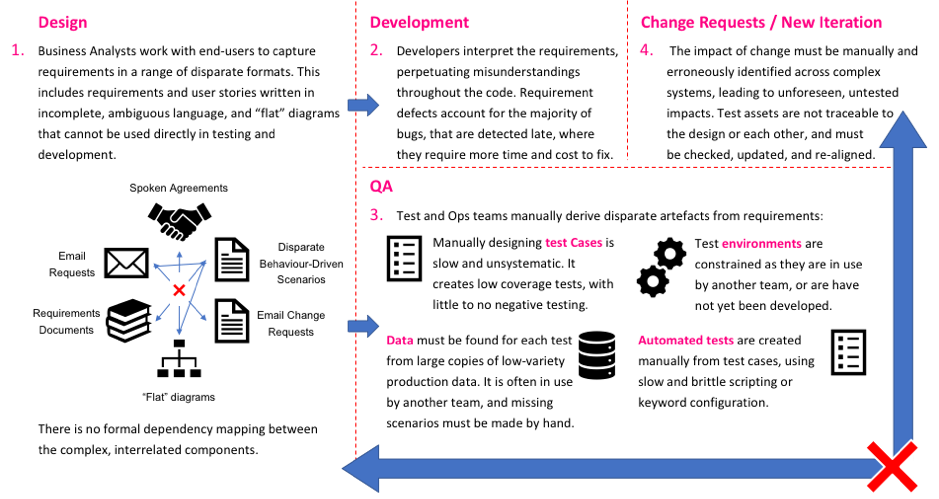

Common test and development bottlenecks persist in “mini-Waterfalls”.

Miscommunications, defects, and bottlenecks persist, stemming from poorly specified requirements and a siloed approach. Bugs are still detected late, by overly manual QA that itself starts late. Considered from the perspective of people, process, and technology, numerous pre-“BDD” problems persist:

Organisations often still design, code, and test systems – in that order. Miscommunications and delays creep in at each stage, as teams wait for the information they need to fulfil their siloed roles to be passed on.

The difference is that teams try to squeeze all this into six-week “mini-Waterfalls”, rather than 18-month projects. QA is pushed ever later, rolling constantly over to the next iteration and leaving the bulk of a system exposed to defects.

Fragmentary user stories have replaced the monolithic requirements documents that stood at the start of Waterfall projects. These disparate scenarios are individually incomplete, but are not connected formally into a complete system either.

The natural language used to formulate Behaviour-Driven scenarios is furthermore good for designing UIs and front-end systems, but poorly suited for machine logic and APIs. It also tends to be ambiguous and logically imprecise, so that the majority of defects that can be traced back to requirements remain.

Automated tests derived from Behaviour-Driven scenarios are naturally incomplete, focusing on the “happy path” scenarios that are specified as desired user behaviour.

QA therefore leaves much of the system’s logic exposed to defects, and particularly the negative scenarios that are most likely to cause more severe defects. At the same time, however, certain logic will be repetitiously over-tested, by virtue of the linear nature of Behaviour-Driven Scenarios that are not consolidated during testing.

QA teams often still rely on centralised, overworked Ops teams to copy complex production data and spin up environments. Further time is therefore wasted as testers wait for data and environments to be provisioned, or wait for limited resources to be used by another team.

The large copies of masked production data are furthermore low-variety, containing only the expected scenarios that have occurred in the past. They do not contain the outliers and unexpected results needed for rigorous testing, and by definition cannot test functionality not yet released to production.

The disparate, unconnected scenarios further lack formal dependency mapping. This means that the upstream and downstream impact of new stories or change requests must be identified manually across increasingly complex systems.

Low-priority changes in turn throw up system-wide defects, while test cases, data, and automated tests must all be updated manually to reflect the latest changes. This manual maintenance is slow and repetitious, and can quickly swallow up whole iterations at the expense of testing new or critical functionality.

This siloed, overly manual approach stands in contrast to the original principles of BDD. It undermines parallelism and the ability to move desired user behaviour quickly from design to deployment.

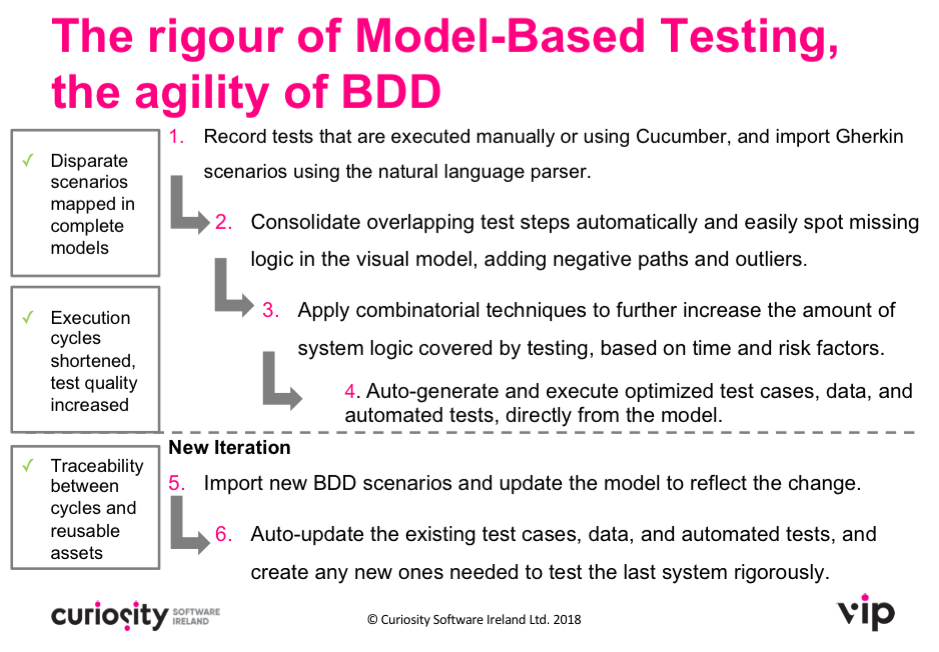

“Model-Driven Development” offers an alternative approach that retains BDD’s flexibility to fast-changing user needs, while introducing a greater degree of rigour and automation. QA teams can move automatically from existing artefacts to the complete models needed for automated and optimised testing.

This approach builds on existing tests and Behaviour-Driven scenarios to combine the principles of BDD with the rigour of Model-Based Testing. It enables collaborative business and engineering teams to:

It is possible to build complete, formal models within short iterations. Behaviour-Driven requirements and existing tests can be converted automatically to flowcharts, for instance, using Gherkin importers and a UI Recorder.
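To make the idea of converting scenarios into a flowchart concrete, here is a minimal sketch in Python. It is not how any particular Gherkin importer works: the sample scenarios, the `parse_scenarios` and `build_flowchart` helpers, and the `START`/`END` node names are all assumptions made for illustration. It only shows how linear scenarios that share steps can be merged into one directed graph.

```python
from collections import defaultdict

# Hypothetical scenarios, written in a simplified subset of Gherkin.
GHERKIN = """\
Scenario: Successful login
  Given the user is on the login page
  When the user submits valid credentials
  Then the dashboard is shown

Scenario: Failed login
  Given the user is on the login page
  When the user submits invalid credentials
  Then an error message is shown
"""

STEP_KEYWORDS = {"Given", "When", "Then", "And", "But"}


def parse_scenarios(text):
    """Return {scenario name: [step text, ...]} for simple Gherkin text."""
    scenarios = {}
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("Scenario:"):
            current = line[len("Scenario:"):].strip()
            scenarios[current] = []
            continue
        keyword, _, rest = line.partition(" ")
        if current is not None and keyword in STEP_KEYWORDS and rest:
            # Drop the keyword so that identical steps merge into one node.
            scenarios[current].append(rest)
    return scenarios


def build_flowchart(scenarios):
    """Merge all scenarios into one graph: node -> set of successor nodes."""
    edges = defaultdict(set)
    for steps in scenarios.values():
        path = ["START", *steps, "END"]
        for src, dst in zip(path, path[1:]):
            edges[src].add(dst)
    return dict(edges)


flowchart = build_flowchart(parse_scenarios(GHERKIN))
for node, successors in flowchart.items():
    print(node, "->", sorted(successors))
```

The shared step ("the user is on the login page") becomes a single decision point with two outgoing branches, which is the point at which missing branches in the requirements become easier to spot.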

Flowcharts are already familiar to BAs, many of whom already use Business Process Models. The models therefore retain a “ubiquitous language”, while developers and QA can work in parallel from the same system design.

This facilitates a “shift left” approach where testers help to build better quality, testable systems, removing potentially costly defects during the design phase. The flowcharts have the local precision needed to eradicate design ambiguities, while incompleteness is more easily spotted at the model-level.

The Model-Driven Approach is also Test-Driven, with automated tests and data derivable directly from the flowcharts. QA then becomes a largely automated comparison of the designs to the code, working in parallel with requirements gatherers.

This not only eradicates the bottlenecks associated with manual test case design, but drives up the quality of testing. Automated coverage techniques generate the smallest set of test cases needed to exhaustively test the requirements model, while Risk-Based approaches can target new or critical functionality based on time and test history.
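As a rough illustration of coverage-driven test generation, the sketch below enumerates every path through a small, hypothetical flowchart and then greedily picks paths until every edge has been exercised. The `FLOW` model and the function names are assumptions for the example. The greedy heuristic also usually gives a small set, but is not guaranteed to give the smallest one, and the code assumes the model has no loops.

```python
# Hypothetical requirements model: node -> list of successor nodes (acyclic).
FLOW = {
    "START": ["choose account"],
    "choose account": ["current account", "savings account"],
    "current account": ["enter amount"],
    "savings account": ["enter amount"],
    "enter amount": ["amount valid", "amount invalid"],
    "amount valid": ["END"],
    "amount invalid": ["END"],
}


def all_paths(flow, node="START", end="END"):
    """Enumerate every START-to-END path (assumes an acyclic model)."""
    if node == end:
        return [[end]]
    return [
        [node, *rest]
        for successor in sorted(flow.get(node, ()))
        for rest in all_paths(flow, successor, end)
    ]


def path_edges(path):
    """Return the set of (source, target) edges that a path traverses."""
    return set(zip(path, path[1:]))


def edge_cover(flow):
    """Greedily choose paths until every edge is covered at least once."""
    paths = all_paths(flow)
    uncovered = {(src, dst) for src, dsts in flow.items() for dst in dsts}
    chosen = []
    while uncovered:
        best = max(paths, key=lambda p: len(uncovered & path_edges(p)))
        gained = uncovered & path_edges(best)
        if not gained:
            break  # remaining edges are not on any START-to-END path
        chosen.append(best)
        uncovered -= gained
    return chosen


tests = edge_cover(FLOW)
print(f"{len(all_paths(FLOW))} possible paths, {len(tests)} selected")
for path in tests:
    print(" -> ".join(path))
```

In this small model, two of the four possible paths are enough to exercise every edge. A Risk-Based variant could weight edges (for example by how recently they changed) instead of treating them all equally.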

The automated tests and test data have been derived directly from the system designs, and are therefore traceable directly to them. This enables automated test maintenance: as the central designs change, the rigorous automated tests update automatically. QA can therefore focus on developing test assets for newly introduced functionality, testing fast-changing systems rigorously.

Full dependency mapping meanwhile means that when the designs are updated, developers can know exactly what across complex systems has been affected, implementing the change reliably and without potentially catastrophic unforeseen consequences.
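The impact analysis described above can be sketched as a graph traversal. In the hypothetical example below, `DEPENDS_ON` records which models each component relies on, and `impacted_by` walks the inverted graph to list everything downstream of a change. The component names and both identifiers are invented for illustration, not taken from any tool.

```python
from collections import deque

# Hypothetical dependency map: component -> components it depends on.
DEPENDS_ON = {
    "customer model": [],
    "account model": [],
    "login flow": ["customer model"],
    "payment flow": ["login flow", "account model"],
    "statement export": ["payment flow"],
}


def impacted_by(changed, depends_on):
    """Return every component that directly or indirectly depends on `changed`."""
    # Invert the map so we can walk from a component to its dependents.
    dependents = {}
    for component, dependencies in depends_on.items():
        for dependency in dependencies:
            dependents.setdefault(dependency, set()).add(component)

    impacted = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for dependent in dependents.get(current, ()):
            if dependent not in impacted:
                impacted.add(dependent)
                queue.append(dependent)
    return impacted


print(sorted(impacted_by("customer model", DEPENDS_ON)))
```

The same traversal could be used to decide which generated tests need regenerating after a design change, assuming each test is linked back to the model it was derived from.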

Model-Based Testing can facilitate a truly Behaviour-Driven approach, capable of delivering rigorously tested software within short iterations. It retains all the agility of Behaviour-Driven development, while introducing the rigour of Model-Based Testing to short iterations:

Model-Driven Development: An example iteration combining Behaviour-Driven Development and Model-Based Testing.

If you would like to find out more or arrange a demo, please feel free to get in touch at info@curiosity.software.

[1] Dan North & Associates (2006), Introducing BDD.

[Image: Pixabay]
