Curiosity's Enterprise Test Data

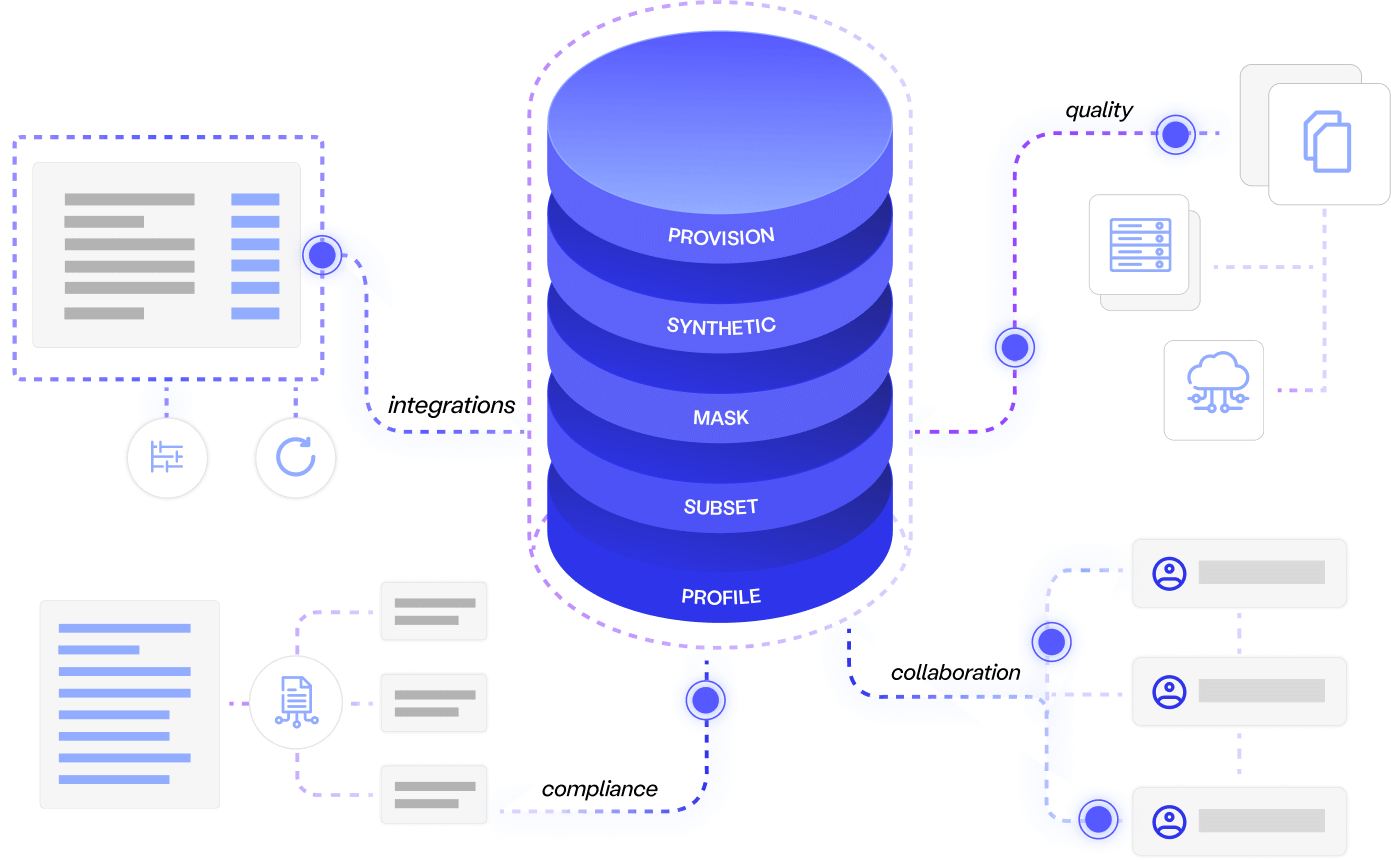

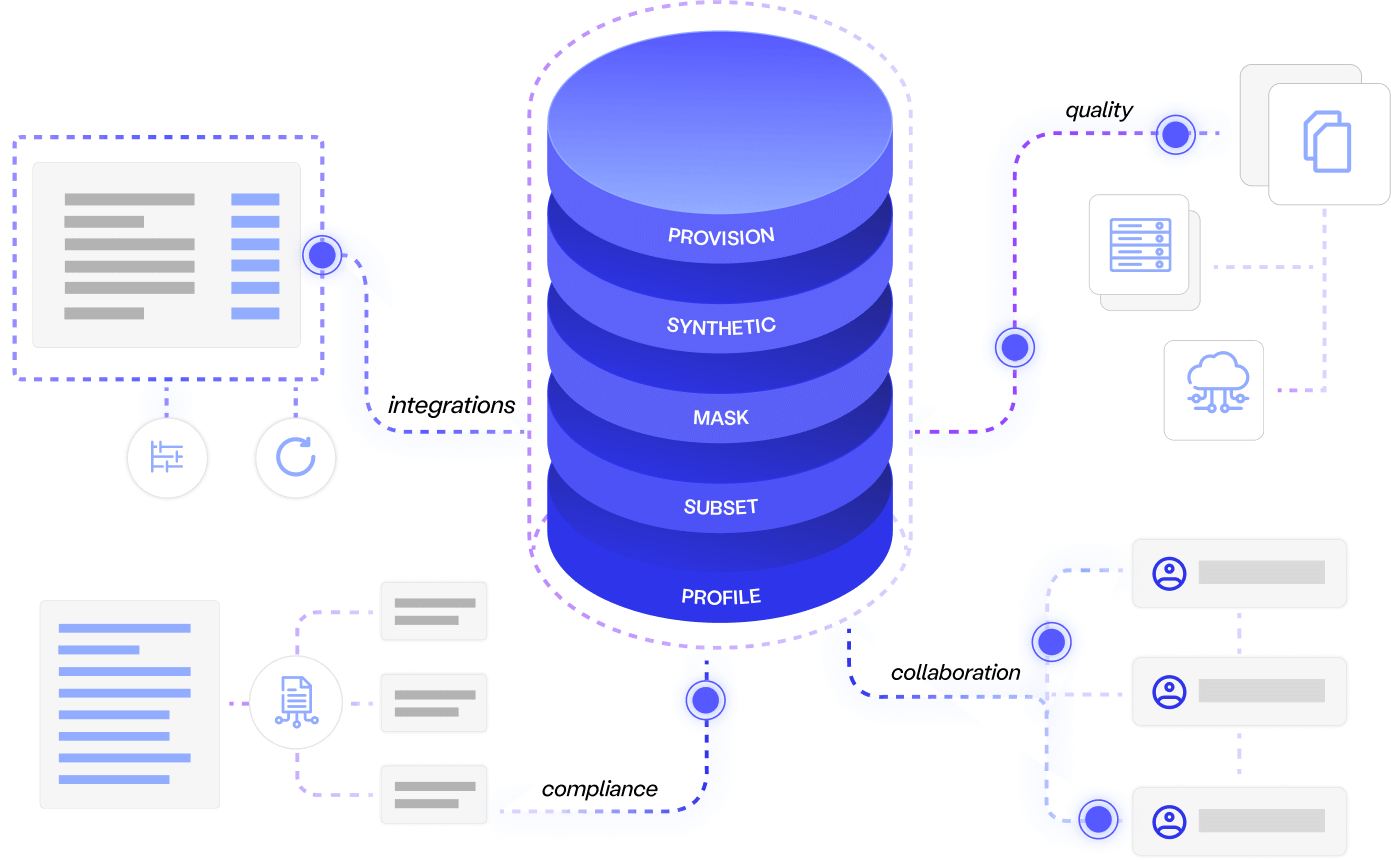

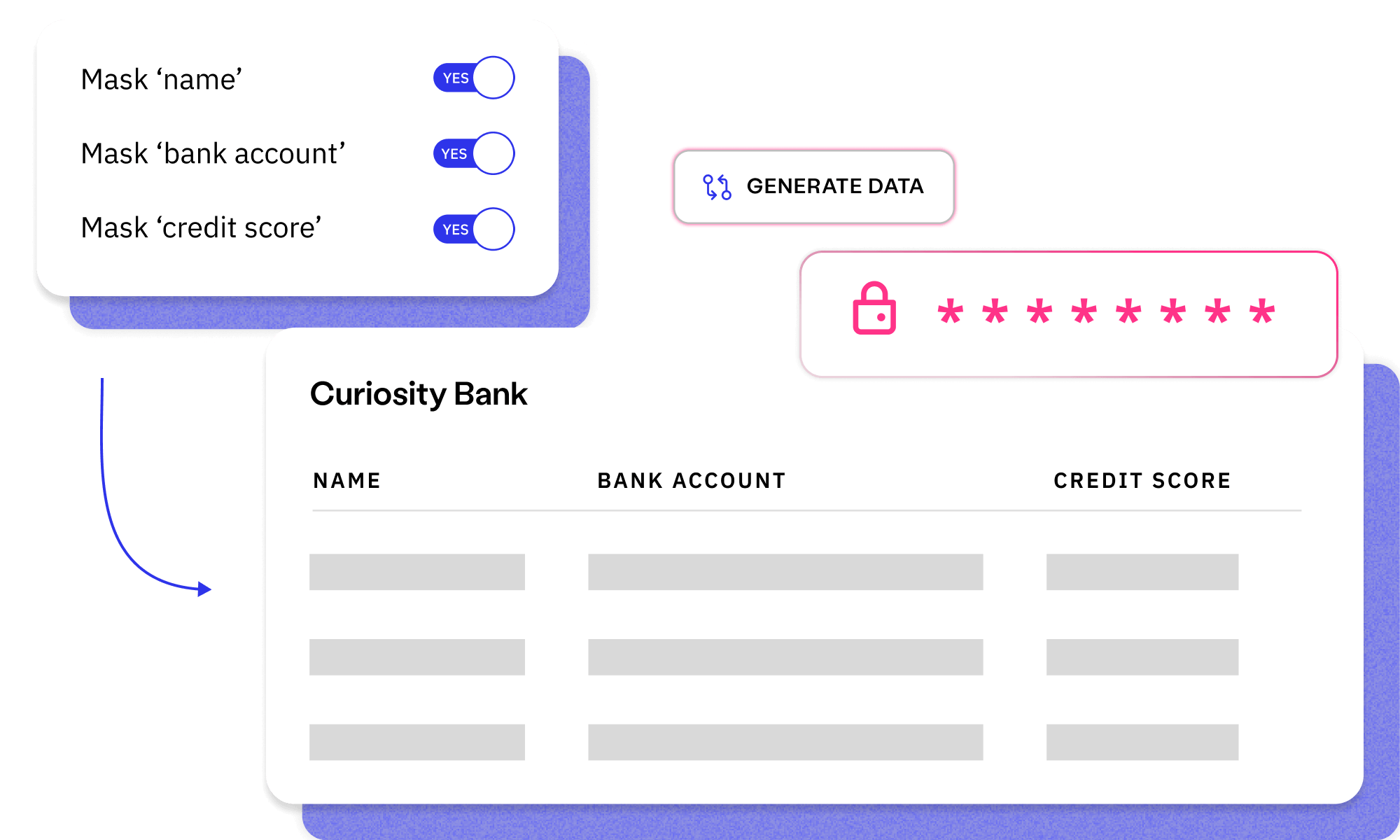

Discover the all-in-one platform for enterprise test data needs. Understand complex data, remove...

AI-powered. End-to-end. Your complete test data management platform.

Explore Curiosity's collection of webinars, podcasts, blogs and success stories, covering everything from visual modelling to artificial intelligence and test data management.

Deliver superior test data and overcome the challenges of complexity, legacy, scale, and regulation with Curiosity Software.

Provide the upfront tests, data and requirements needed to avoid bugs, delays and budget overrun.

When embarking on a migration, ask yourself:

Do you understand the legacy system’s data?

Do you understand the new system in detail? And is that knowledge stored in requirements for future use?

Do you have compliant data for testing every transform and requirement?

Are you testing early enough to give yourself time to fix and pivot?

Fortunately, a unified solution can fulfil each of these criteria. This integrated approach ensures that your teams have the upfront requirements, understanding and data needed for a successful migration!

Read the whitepaper to learn more!

Today, the majority of enterprises are engaged in ongoing system migrations – and most of those projects will either fail completely, overrun on time, or exceed their budget. Too often, key failings during data migrations combine with insufficient testing and design. This leads to compliance risks and costly bugs, which are often only identified once it’s too late.

This eBook outlines a unified solution to these failings. The integrated approach combines data analysis and archaeology with automated test generation and requirements modelling. This provides the data, requirements and understanding needed for a successful data migration, while applying “shift left” testing far earlier in the migration project.

This approach not only supports a successful data migration; it also provides the “living documentation” and rigorous in-sprint testing needed to keep innovating on the migrated system. The eBook will therefore conclude by summarising how the proposed approach “future proofs” development of migrated systems, including the eventual migration to subsequent systems.

Using a checklist of four criteria during data migration planning helps address risks around requirements quality, system understanding, cost and regulatory compliance.

A unified solution to design and testing provides the upfront requirements, understanding and data needed for a successful data migration.

Automated data analysis can provide the requisite understanding of system data, informing refined requirements that generate complete data for "shift left" migration testing.

Read a free resource, or talk to an expert, to learn how you can embed productivity and quality throughout your software delivery ecosystem.

Self-service generation removed 5-day provisioning bottlenecks, hitting the volumes and variety...

Read more about Data generation at a large financial institution Read the full story

Talk to us to learn how Enterprise Test Data can embed productivity, quality and privacy across...

Read more about Meet with a Curiosity expert Book now