Automated Solr data provisioning

Populate, mask, subset and generate Solr data on demand

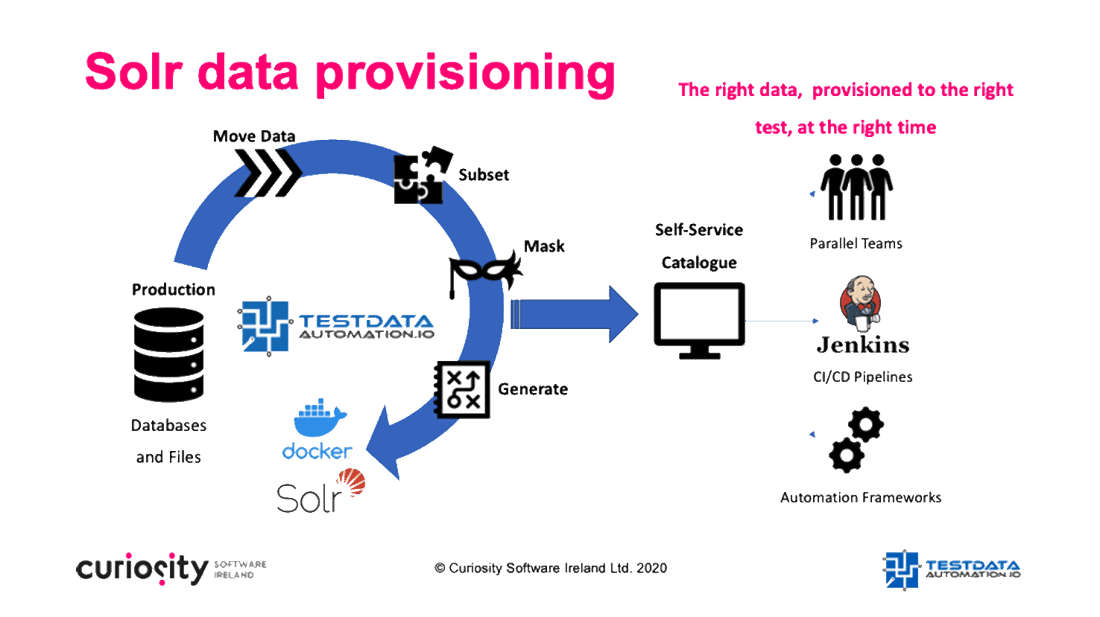

Populate high volumes of rich Solr data into test environments on demand, rigorously testing fast-changing systems. Test Data Automation combines flexible masking, subsetting and generation with self-service provisioning, all integrated seamlessly into DevOps toolchains.

Your searches are speedy – but how fast is your testing?

Apache Solr provides a cutting-edge, open source data search and indexing technology, forming the backbone of business-critical and customer-facing systems today. However, Solr’s capacity to process vast quantities of complex data in real time creates a demanding test data requirement. QA requires rich test data with which to validate the expected production scenarios, as well as the greater quantities of data combinations needed to test against negative scenarios and unexpected results.

This varied data must be kept up-to-date at the speed of iterative development, and must be provisioned within existing DevOps toolchains. It must also often be masked before it reaches QA environments to avoid legislative non-compliance, while QA teams require quick-to-use techniques for finding coherent data for exact test scenarios. Typically, that spells bottlenecks stemming from complex data provisioning and searching for data, while bugs damage production systems because QA has access to only a fraction of the combinations it needs.

Rich Solr data, available on demand and in parallel

Test Data Automation provides a simple, automated and on-demand approach to populating rich Solr data in QA environments, manipulating it on-the-fly to enable rigorous testing and test compliance at speed. High-speed, parallelized workflows move high-volume data from a range of sources to Solr and Docker containers in seconds and are available on demand from a self-service web portal. Parallel test and development teams can request the rich Solr data they need to ensure that systems deliver valuable insights, unconstrained by the delays associated with slow and manual data provisioning.

The rapid data loads weave in the flexible test data processes needed to ensure that QA is rigorous and compliant, testing business-critical systems without the risk of exposing sensitive data. Rapid and reliable data masking anonymizes data as it’s moved to Solr on demand, while “criteria” subsetting can be used to request the exact data combinations needed to fulfil specific test scenarios. “Covered” subsets and seamless synthetic test data generation enhance the quality of data as it’s moved to Solr, including model-based data generation to ensure that tests execute every positive and negative data journey.
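As a rough illustration of the idea, and not a description of how Test Data Automation itself is implemented, the sketch below applies a “criteria” subset and masks a sensitive field before building a JSON payload of the kind Solr’s update handler accepts. The sample rows, field names, salt and helper functions (`mask_value`, `criteria_subset`, `to_solr_docs`) are all hypothetical and chosen only for this example.

```python
import hashlib
import json


def mask_value(value: str, salt: str = "demo-salt") -> str:
    """Replace a sensitive value with a deterministic token (hypothetical scheme)."""
    digest = hashlib.sha256((salt + value).encode("utf-8")).hexdigest()
    return "masked_" + digest[:12]


def criteria_subset(rows, criteria):
    """Keep only rows whose fields match every key/value pair in the criteria."""
    return [
        row for row in rows
        if all(row.get(key) == wanted for key, wanted in criteria.items())
    ]


def to_solr_docs(rows, sensitive_fields):
    """Copy each row and mask the listed fields, leaving the source rows untouched."""
    docs = []
    for row in rows:
        doc = dict(row)
        for field in sensitive_fields:
            if field in doc:
                doc[field] = mask_value(str(doc[field]))
        docs.append(doc)
    return docs


# Hypothetical source rows, standing in for data read from a SQL table.
rows = [
    {"id": "1", "name": "Alice Example", "country": "UK", "status": "active"},
    {"id": "2", "name": "Bob Example", "country": "US", "status": "active"},
    {"id": "3", "name": "Carol Example", "country": "UK", "status": "closed"},
]

subset = criteria_subset(rows, {"country": "UK", "status": "active"})
docs = to_solr_docs(subset, sensitive_fields=["name"])

# A JSON array of documents like this could be POSTed to a Solr core's
# /update handler with Content-Type: application/json.
payload = json.dumps(docs)
print(payload)
```

Because the masking here is deterministic, the same input always yields the same token, which keeps masked values consistent across repeated loads. A real masking tool would typically offer richer, format-preserving options.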

Configuring the utilities is as quick and simple as populating a fill-in-the-blanks spreadsheet and exposing the process to the on demand data portal, while data can be pushed to Docker containers, resolved dynamically during automated test execution, and triggered as part of CI/CD pipelines. The result? “Agile” test data available in parallel and on demand, with testing that matches the speed of your Solr searches.

Quality and compliant testing, at the speed of your development

These short examples showcase rapid and easy-to-use masking, subsetting and generation for SQL data as it’s moved automatically to Solr and Docker containers. Watch the short demos of producing rich and compliant data to test graphs generated via a Solr Core, and see how:

- A collaborative web portal rapidly moves, masks, subsets and generates data into Solr and Docker containers, using a simple fill-in-the-blanks form to trigger automated jobs.
- High-speed parallelized data loads move 231,000 rows of data rapidly to Solr, requiring only a few seconds and a few clicks.
- High-performance loads can be performed in tandem with flexible data masking, generation and subsetting, enabling complete Solr test data management.
- Configuring data subsetting, generation and masking is as quick and simple as filling in blanks in an easy-to-use configuration spreadsheet, exposing the re-usable jobs to the central web portal.
- Masking data as it is moved to Solr and Docker containers reduces compliance risk, while still providing testers and developers with the data they need, exactly when and where they need it.
- Subsetting Solr data as it is moved rapidly to test and development environments provisions exactly the data needed to fulfil a complete set of test scenarios.
- “Criteria” subsets provide data to fulfil test scenarios on demand, removing the bottlenecks associated with manual data provisioning, hunting for data, and manual data creation.
- “Covered” subsets automatically combine Solr data dimensions to create richer test data sets, rapidly optimising test coverage to detect bugs earlier and at less cost.
- Synthetic test data generation augments test data as it is moved to Solr environments and is as simple as specifying Excel-like functions to add new scenarios needed in testing.
- Model-based data generation builds intuitive visual models of the data journeys that need to be tested, applying coverage algorithms to make sure QA rigorously executes every scenario.
- The re-usable data loads are fully integrated into DevOps pipelines, triggering “just in time” data provisions from schedulers and environment managers, via REST API, and during test execution.
- Automated data “finds” during automated test execution hunt in back-end databases, performing test assertions to validate that Solr data has been updated accurately.